What the goblins can teach us about enterprise AI

Given at AI for the Rest of Us (London, UK) on .

A talk built around an odd test: if an AI can run a Dungeons & Dragons game, can it run enterprise software? Coté walks through what his solo D&D AI - ChatDM - is good at, what it gets wrong, how MCP tools and journals patch the gaps, and why AI is so valuable to individuals while staying invisible at the enterprise level. Closes on a theory: AI is valuable to people, but AI is not legible to enterprises.

Transcript

(Enterprise) AI is a failure. Or disappointing. Or improving.

If you take the launch of ChatGPT 3.5 as a starting line, the past 30 days of AI talk has basically been a pile of horse hockey. James Governor had that Bloomberg diagram in his talk - the circular power strip plugged into itself, money circling around. People are doubting whether this stuff actually works, and there are plenty of studies about the lack of effectiveness of enterprise AI. Which is a bummer for me, since I work at one of the companies that's benefited massively from AI. I enjoy compensation as much as the next person, so I'd like this to be successful.

I've seen this movie before. My first job, in 1995, was at an Austin company called Shop Us, where the brilliant idea was to sell cowboy boots online through a virtual-reality shopping mall. We sold a total of two boots - one to someone in Australia. Sometimes you have to wait a while for a technology to be useful. So for the sake of my portfolio and early retirement, let's say things have been improving over the last few months. That's a better way of looking at it.

If the robot can play D&D, it can play enterprise software

I keep saying enterprise software, so it's worth explaining. It's the software that runs our lives - banks, supply chains, payments, online banking, governments, militaries, manufacturers, pharmaceuticals. Everything except videos of cats eating sandwiches. Civilization as we know it would crumble without it. And when you look at enterprise software up close, it's very complicated processes - one stage leading to another, choices to make, contract negotiations, physical supply chains. The kind of thing you'd think you'd want to apply AI to.

Here's the move. One of the diagrams I've been showing isn't enterprise software at all - it's a flow chart of how to cast a spell in Dungeons & Dragons. Equally complicated, different domain, a little less boring. So my theory is: if the robot can play D&D, it can play enterprise software. Solve for the nuance there - combat, sneaking behind a pillar as a rogue, all the weirdness - and your procurement workflow gets a lot easier.

1991, then 2023

I played D&D every weekend as a kid in the early 90s with my friend Mason - very nerdy, staying up late, questionable t-shirts. Eventually friends got cars, I discovered socializing, and it fell off. Fast forward through Pivotal, then VMware, then VMware Tanzu by Broadcom, then Tanzu by Broadcom - if you've been through a few acquisitions, you know the joy of figuring out what your identity is - and in 2023 I had four days in a South Amsterdam hotel with not much to do. So I tried this ChatGPT thing for solo role-playing. It sounds pathetic and sad, but it's actually nice. There's a whole structure for how you introduce randomness when you play on your own, and AI seemed like a great way to have a dungeon master who's always available. That's where ChatDM came from.

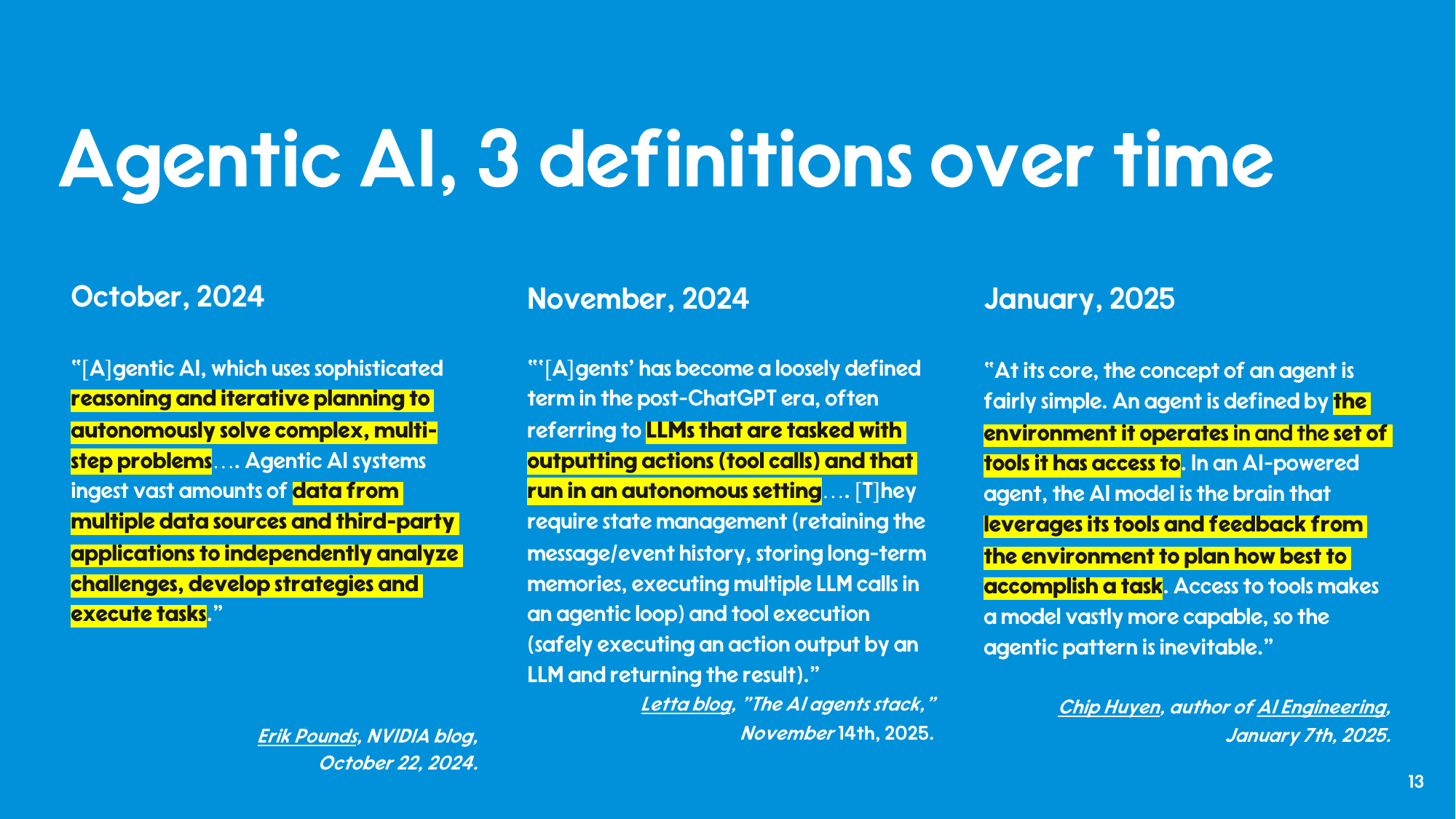

What "agentic AI" means, depending on who you ask

What you want from a D&D AI is the same list everyone in the trade is now calling agentic: creative, takes initiative, knows the rules, applies them, moves the plot, and is fun. Which lines up with the various definitions floating around. Erik Pounds at NVIDIA in October 2024 framed agentic AI as systems that use sophisticated reasoning and iterative planning to autonomously solve multi-step problems. The Letta blog in November 2025 talked about LLMs tasked with outputting actions, running in autonomous loops, with state management and tool execution. Chip Huyen, author of AI Engineering, in January 2025 went simpler: an agent is defined by the environment it operates in and the tools it has access to. Three different definitions, all pointing at the same thing - something that acts on its own, has opinions, executes tasks. Which is a dungeon master, or a player.
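The three definitions share one shape: a model looks at its state, picks an action, a tool executes it, the result goes back into state, and the loop repeats. Below is a minimal TypeScript sketch of that loop, assuming a deterministic stub in place of a real LLM. `runAgent`, `stubModel`, and the `oracle` tool are hypothetical names for illustration, not ChatDM's code or any agent framework's API.

```typescript
// A toy agent loop: the model looks at the current state and emits an action,
// the loop runs the matching tool, appends the result to state, and repeats
// until the model says it is done or the step budget runs out.

type Action =
  | { kind: "tool"; name: string; input: string }
  | { kind: "done"; summary: string };

interface AgentState {
  goal: string;
  history: string[]; // tool results the model sees on its next turn
}

type Tool = (input: string) => string;
type Model = (state: AgentState) => Action;

function runAgent(
  model: Model,
  tools: Record<string, Tool>,
  goal: string,
  maxSteps = 10,
): AgentState & { summary: string } {
  const state: AgentState = { goal, history: [] };
  for (let step = 0; step < maxSteps; step++) {
    const action = model(state);
    if (action.kind === "done") {
      return { ...state, summary: action.summary };
    }
    const tool = tools[action.name];
    const result = tool ? tool(action.input) : `unknown tool: ${action.name}`;
    state.history.push(`${action.name}(${action.input}) -> ${result}`);
  }
  return { ...state, summary: "stopped: step budget exhausted" };
}

// Hypothetical stand-in for an LLM: calls the oracle once, then finishes.
const stubModel: Model = (state) =>
  state.history.length === 0
    ? { kind: "tool", name: "oracle", input: "Is there a goblin in the bushes?" }
    : { kind: "done", summary: `Narrate using: ${state.history[0]}` };

// Hypothetical tool; the fixed answer keeps the example deterministic.
const demoTools: Record<string, Tool> = {
  oracle: (_question) => "yes",
};

const outcome = runAgent(stubModel, demoTools, "Run the next scene");
console.log(outcome.summary);
// Narrate using: oracle(Is there a goblin in the bushes?) -> yes
```

The step budget is the part that keeps "autonomous" from meaning "runs forever": a model that never says it's done still stops after `maxSteps` tool calls.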

What the robot is good at

A few things hold up. The robot never gets bored. It's always available - which is great for solo play, especially at the end of the day when I'm a little exhausted. Our brilliant idiot in our pocket is always ready to go. It's recently gotten much more imaginative - early on it was terrible at coming up with stories, but now it'll quickly set up an opportunity-rich scenario. It's OK at following the rules. The robot has read all of the internet, including 20 years of Reddit, so unlike obscure Hungarian contract management it has a huge body of D&D knowledge to draw from. Solid B student. Goes to a good state school. And it's dirt cheap - 20 pounds, dollars, or euros a month. I shouldn't do the math on the annual figure.

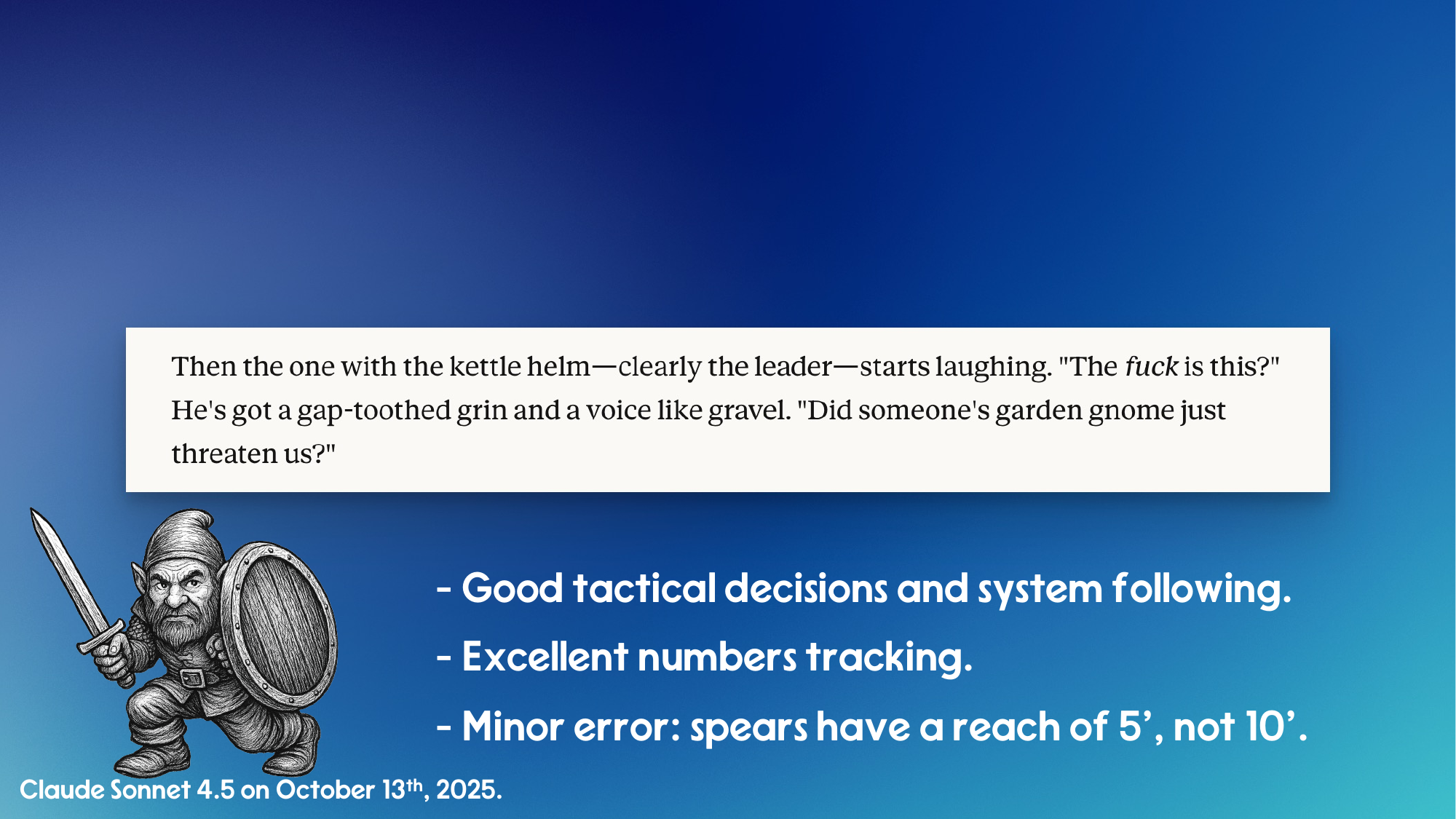

Showing AI prompts is a little like sharing your dreams - tedious for everyone but the dreamer - but here's a quick example. I run a chaotic neutral gnome fighter named Spin, because chaotic neutral lets you respond to anything without rhyme or reason, and a fighter can get out of any situation without specialized gear. I drop the prompt in, and Claude Sonnet 4.5 immediately throws Spin into a village being tormented by bandits (it would have been cooler if it had picked goblins, but bandits will do). It runs decent tactics across three of them and adjusts as I drop one; eventually the bandits back down, and the villagers offer cheese and a free place to stay. Very typical D&D. The grading at the bottom of the slide: good tactical decisions, excellent number tracking, and one minor error - spears have a reach of 5 feet, not 10.
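One way to catch the spear mistake is to have the model call a rules-lookup tool rather than recall the rule from memory. A sketch in TypeScript, assuming a small hard-coded table based on the 5e Player's Handbook, where normal melee reach is 5 feet and the reach property adds 5 feet. `weaponReach` and the weapon lists are hypothetical and deliberately incomplete; this is not how ChatDM does it.

```typescript
// Hypothetical rules-lookup tool: answers "what is this weapon's reach?"
// from a table instead of the model's memory. Covers only a few weapons.
const BASE_MELEE_REACH_FT = 5;
const REACH_PROPERTY_BONUS_FT = 5;

// Weapons with the "reach" property in the 5e Player's Handbook.
const REACH_WEAPONS = new Set(["glaive", "halberd", "lance", "pike", "whip"]);
// Other melee weapons this sketch knows about (none has the reach property).
const KNOWN_MELEE = new Set(["spear", "dagger", "longsword", "club", "mace", "scimitar"]);

function weaponReach(weapon: string): number {
  const name = weapon.trim().toLowerCase();
  if (REACH_WEAPONS.has(name)) return BASE_MELEE_REACH_FT + REACH_PROPERTY_BONUS_FT;
  if (KNOWN_MELEE.has(name)) return BASE_MELEE_REACH_FT;
  // Better to fail loudly than let the model fill the gap with a guess.
  throw new Error(`unknown weapon: ${weapon}`);
}

console.log(weaponReach("Spear")); // 5
console.log(weaponReach("pike")); // 10
```

Throwing on unknown weapons is the design choice that matters: the tool's value is that it never confidently makes something up.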

What the robot is bad at

The robot is predictable, boring. I'm no expert on how AI works, but I'm pretty sure it picks the most likely next thing. So if you've played D&D and you're going down a road and there's a dead horse, a fallen tree, or a curve in the road, you already know what happens next - you're getting ambushed by goblins. Same with taverns. Walk into a tavern and there's a mysterious hooded figure in the corner. Goblin ambush is cliche number one, hooded figure in the corner is number two. You have to specifically tell the AI not to do that.

It's clumsy. It tells you what's about to happen - the goblin's stats, its tactics, what it's going to do next - which a player isn't supposed to know. It's passive, mostly. And it forgets. I've been playing these characters for two years and the AI doesn't remember everything that happened, the way a human DM would. Same problem you'd have with a contract you've been negotiating for a year.

Two specific failures worth calling out. First, data leaks: the AI cheerfully narrates what the goblin in the bushes is doing while it's still hidden, which is exactly the information the player isn't supposed to have. Second, it skips special features. Goblins have Nimble Escape, which lets them take the Disengage or Hide action as a bonus action. Disengaging is what a goblin in trouble should do, because goblins, like most of us, don't want to die. The AI just keeps it attacking instead.
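Both failures can be pushed out of the model and into code: keep full DM-side state, filter it before anything reaches the player, and encode the creature's options explicitly. A TypeScript sketch, assuming a hypothetical `Creature` shape, the 5e goblin's 7 hit points, and a made-up morale rule (flee below half HP) that is not from the rulebook.

```typescript
// Hypothetical DM-side state. The DM (model or tool) knows everything;
// only what passes playerView() goes into the narration the player reads.
interface Creature {
  name: string;
  hp: number;
  maxHp: number;
  hidden: boolean; // true while the player has not spotted it
  nimbleEscape: boolean; // goblin trait: Disengage or Hide as a bonus action
}

// Data-leak guard: hidden creatures never reach the player-facing view.
function playerView(creatures: Creature[]): string[] {
  return creatures.filter((c) => !c.hidden).map((c) => c.name);
}

interface TurnPlan {
  bonusAction?: "Disengage";
  action: "Attack" | "Dash";
}

// Assumed morale rule (not from the rulebook): below half HP, a creature with
// Nimble Escape disengages as a bonus action and Dashes away rather than fighting on.
function planTurn(c: Creature): TurnPlan {
  const badlyHurt = c.hp < c.maxHp / 2;
  if (badlyHurt && c.nimbleEscape) {
    return { bonusAction: "Disengage", action: "Dash" };
  }
  return { action: "Attack" };
}

const goblinA: Creature = { name: "goblin A", hp: 3, maxHp: 7, hidden: false, nimbleEscape: true };
const goblinB: Creature = { name: "goblin B", hp: 7, maxHp: 7, hidden: true, nimbleEscape: true };

console.log(JSON.stringify(playerView([goblinA, goblinB]))); // ["goblin A"]
console.log(JSON.stringify(planTurn(goblinA))); // {"bonusAction":"Disengage","action":"Dash"}
console.log(JSON.stringify(planTurn(goblinB))); // {"action":"Attack"}
```

The model still writes the prose; it just writes it from `playerView` and `planTurn` output instead of from everything it knows.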

Tools and plugins to fix the gaps

Solo role-playing has a long, rich tradition of tools - call them thought technologies - for injecting randomness, and they work just as well for getting around the AI's predictability. The most famous is the Mythic Game Master Emulator, which is the nerdiest phrase I have ever said out loud. There's also the Plot Unfolding Machine, which sounds a lot cooler. These are systems for asking oracle questions - "is there a goblin hiding in the bushes?" - and rolling dice for unexpected outcomes: NPC reactions, encounter tables, meaning tables (old, meaningful), reactions (usurp, refuse), encounters (manticore, cobbler).
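The core mechanic is small: roll against the odds for a yes/no question, or roll on a table. A TypeScript sketch, assuming made-up odds (25/50/75%) and an "exceptional" band at the outer fifth of each range; this is a simplified stand-in, not the Mythic GME fate chart or the Plot Unfolding Machine's actual rules. The tiny tables reuse the examples from the slide.

```typescript
// Simplified oracle sketch. NOT Mythic's fate chart or the Plot Unfolding
// Machine's rules; the odds and "exceptional" bands are assumptions.
type Rng = () => number; // returns a float in [0, 1), like Math.random

function roll(sides: number, rng: Rng = Math.random): number {
  return Math.floor(rng() * sides) + 1;
}

type Odds = "unlikely" | "50/50" | "likely";
type Answer = "exceptional yes" | "yes" | "no" | "exceptional no";

// Percent chance of "yes" for each odds level (assumed values).
const YES_CHANCE: Record<Odds, number> = { unlikely: 25, "50/50": 50, likely: 75 };

// Roll d100 against the chance; the outer fifth of each side is "exceptional".
function askOracle(odds: Odds, rng: Rng = Math.random): { roll: number; answer: Answer } {
  const chance = YES_CHANCE[odds];
  const r = roll(100, rng);
  let answer: Answer;
  if (r <= Math.floor(chance / 5)) answer = "exceptional yes";
  else if (r <= chance) answer = "yes";
  else if (r > 100 - Math.floor((100 - chance) / 5)) answer = "exceptional no";
  else answer = "no";
  return { roll: r, answer };
}

// Pick one entry from a random table.
function rollOn<T>(table: readonly T[], rng: Rng = Math.random): T {
  if (table.length === 0) throw new Error("empty table");
  return table[Math.floor(rng() * table.length)] as T;
}

// Tiny tables using the examples from the slide.
const MEANING = ["old", "meaningful"] as const;
const REACTIONS = ["usurp", "refuse"] as const;
const ENCOUNTERS = ["manticore", "cobbler"] as const;

const q = askOracle("50/50");
console.log(`Is there a goblin hiding in the bushes? d100=${q.roll}: ${q.answer}`);
console.log(`Meaning: ${rollOn(MEANING)}, reaction: ${rollOn(REACTIONS)}, encounter: ${rollOn(ENCOUNTERS)}`);
```

Passing a fixed `rng` makes the rolls reproducible for testing the tool; in play you'd leave the default `Math.random`.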

These are easy to wire up as MCP tools. I've written a few in Java and TypeScript; the Spring AI MCP intro is a good starting point. Hand the AI a randomizer, tell it to call the tool, and you start getting interesting results: same combat scene, but now the bandit leader does something more human and less like a mindless automaton. To solve the memory problem, you give the AI a dungeon master journal that saves state across sessions. The tools make it work better, and it's a lot more fun. Code at cote.io/chatdm.
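A journal only has to do two things: accumulate what happened, and hand a short recap back at the start of the next session. A TypeScript sketch, assuming a hypothetical `DmJournal` shape and made-up example entries; it leaves out the MCP wiring and the storage layer, and it is not the code at cote.io/chatdm.

```typescript
// Hypothetical DM journal: state the model shouldn't have to "remember".
interface JournalEntry {
  session: number;
  note: string;
}

interface DmJournal {
  character: string;
  entries: JournalEntry[];
  openThreads: string[]; // unresolved plot hooks
}

function addEntry(j: DmJournal, session: number, note: string): DmJournal {
  return { ...j, entries: [...j.entries, { session, note }] };
}

// Short recap of the last N entries, to hand the model at the start of the
// next session (e.g. as the result of a "read journal" tool call).
function recap(j: DmJournal, lastN = 3): string {
  const recent = j.entries
    .slice(Math.max(0, j.entries.length - lastN))
    .map((e) => `Session ${e.session}: ${e.note}`);
  const threads = j.openThreads.length > 0 ? j.openThreads.join("; ") : "none";
  return [`Character: ${j.character}`, ...recent, `Open threads: ${threads}`].join("\n");
}

// Serialize at the end of a session. Where the string lives (a file, a
// database, an MCP resource) is left open in this sketch.
const save = (j: DmJournal): string => JSON.stringify(j);
const load = (s: string): DmJournal => JSON.parse(s) as DmJournal;

let journal: DmJournal = { character: "Spin", entries: [], openThreads: [] };
journal = addEntry(journal, 1, "Bandits backed down; villagers offered cheese and a free place to stay.");
journal = { ...journal, openThreads: ["Where did the bandits go?"] };

const restored = load(save(journal)); // e.g. next week, in a brand-new chat
console.log(recap(restored));
```

Capping the recap at the last few entries is a guess at a reasonable trade-off: the model gets continuity without re-reading two years of sessions every time.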

In an enterprise context you obviously need a lot more around the AI than "I'm fighting some bandits" - compliance, security, regulation, all of that thrilling stuff. People put together platforms and stacks. More on that at TryTanzu.ai.

The "yes, but" about AI productivity

All the "yes, but" about AI productivity is right. It's a lot of work to get the effortless thing - to borrow Mark Pilgrim's old line from the Python days, a lot of effort goes into making this effortless. That said, I'm programming again and it's super fun. I hadn't programmed since 2005. I'd been trying to get friends to teach me, but they're busy with their lives, and the little guy in my pocket who's never bored is really good at teaching me. And I'm playing D&D again, even in my sad solo life. I should probably play with my kids, but they have homework. So that's my excuse.

The enterprise AI paradox

Why is AI so great for individuals and yet at the enterprise level people are saying this doesn't seem to be doing anything? Here's the way I've been thinking about it.

For a person: if you're 20% more productive with AI, you can do 20% less work. Don't tell your bosses. Leave earlier, book your trip at work, take on less responsibility. Obvious benefit to an individual.

For an organization with 4,000 individual contributors (ICs) who are each 20% more productive: now what? The most imaginative thing organizations have come up with is firing 20% of them. But what do you actually do with that aggregate productivity? Historically, enterprises haven't been great at figuring out what to do with a huge productivity gain. They have to sort it out over time.
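A quick worked check of the numbers, reading "20% more productive" as 20% more output per person-hour (this is arithmetic on the talk's example, not a figure from the talk):

```latex
\begin{aligned}
\text{time for the same work} &= \tfrac{1}{1.2} \approx 0.833 \quad (\approx 16.7\%\ \text{less, not } 20\%)\\
\text{capacity of } 4{,}000 \text{ ICs} &= 4{,}000 \times 1.2 = 4{,}800 \ \text{pre-AI ICs' worth}\\
\text{headcount for today's output} &= 4{,}000 / 1.2 \approx 3{,}333\\
\text{output after cutting } 20\% &= 3{,}200 \times 1.2 = 3{,}840 \ (96\%\ \text{of today})
\end{aligned}
```

Taken literally, cutting 800 people gives back a little output rather than banking the gain; the 800 ICs' worth of extra capacity is exactly the part an organization has to decide what to do with.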

I think it's because it's hard to know, manage, and see the value each individual is getting, and how that value bubbles up to the top. AI is valuable to people. Yes, but AI is not legible to enterprises. In Seeing Like a State (James C. Scott, 1998), legibility is what makes something comprehensible and manipulable to a centralized authority: the process by which complex, local, lived realities get simplified into standardized forms a state can record, monitor, and control. The individual sees the value. The enterprise can't yet read it.

That's been my experience over the past couple of years. And if someone at work is insisting you do AI, tell them: first I need to play some D&D, I'll sort it all out, come back in six months.